Every marketer likes to think they have a better eye than most for good ad creative, myself included. It stands to reason that years and years in this industry would teach us a thing or two about what works and what doesn’t: which words to use and which to avoid, how to shoot the product, where to put the logo (and how big to make it), and so on. We assume we learn to intuit these insights over the course of our careers and can apply them as we review ads before they are released into the wild.

Because our platform tests ad creative, our team sees this assumption fall apart every single day. Ads our customers have been running for years turn out to be so-so performers. They’re bested by a slightly different variation they never would have tried without multivariate testing, sometimes by as much as 53x!

This got us thinking. Just how often are we wrong? How often do we hinder potential ad performance by letting our preferences and flawed intuition get in the way?

To find out, we put five sets of two ads in front of over 750 marketing professionals — from creatives to performance marketers to CMOs — and asked them to guess which of the two was the higher performer.

To show you what they were up against, here’s a recreation of the original quiz. See how many you can guess correctly. Then read on to see what we found.

RESEARCH RESULTS

When it comes to trusting marketers’ creative intuition, you’re almost better off flipping a coin.

Sad but true. Our study found that the average marketing professional predicts winning digital ad creative only 52% of the time. Even sadder, but still true: marketers actually fared worse than non-marketers, who predicted the winners 53% of the time.

Only 4.7% of marketing professionals (who are clearly sorcerers) guessed all five winning ads correctly. That is only slightly better than respondents who did not identify as marketers, just 4.3% of whom got all five right.

The inability to predict ad creative performance is universal across marketing roles.

Each ad set proved hardest for a different marketing role to predict. To see what I mean, let’s walk through each ad set and its results individually.

SolaWave

Wavy line vs. straight line

This set had respondents completely torn, with a near-perfect 50/50 split (49.94% for wavy vs. 50.06% for straight). Performance Marketers had the toughest time here: only 46% of them chose the winning ad.

Taylor Stitch

Product on model vs. product without model

This set was a tricky one: only 47% of respondents picked the winner. Digital Marketing Managers fared worst, with just 36% of them identifying the winning ad. Anecdotally, some respondents who turned to Twitter after completing the quiz said this one “went against everything they’d learned.”

Acadia

"Grow your career" CTA vs. "We're hiring" CTA

Although this set’s winner was guessed correctly more often than any other set’s, only 65% of respondents picked it. Interestingly, CMOs and VPs of Marketing had the hardest time with this set, choosing the winning ad only 59% of the time.

Cactus Credit

Half circle credit meter vs. near-full circle credit meter

This set tripped up respondents the most. On average, only 41% of them were able to predict the winner. Advertising Managers had the hardest time with this one, with only 32% of them guessing the winning ad.

She's Birdie

Product positioned vertically vs. product positioned diagonally

On average, respondents guessed the winning ad from this set only 57% of the time. Creatives actually had the hardest time with this one: only 48% of them picked the winner.

There are only two points in a marketer’s career where they are above average (but still bad) at predicting winning ad creative.

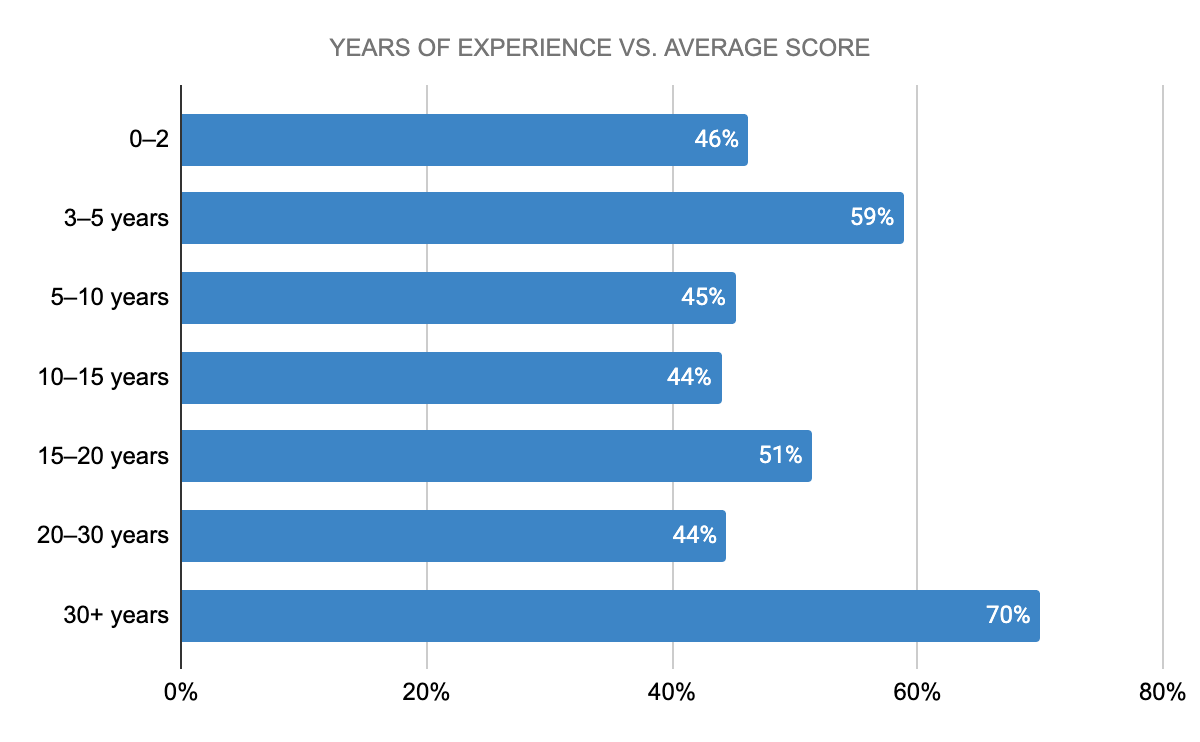

Shortly after the first quiz ended, we ran a second quiz with all-new ad creative. This time, we wanted to see whether marketers with more years of experience are better at predicting winning ads.

Turns out, mid-career marketers are the worst predictors. Those who have been in the industry for 10–15 years and 20–30 years scored lowest, guessing the higher-performing ad only 44% of the time.

Only those with 3–5 years and 30+ years of experience scored above average, correctly guessing the winning ad creative 59% and 70% of the time, respectively. And before we go congratulating them, let’s remember: if this were a graded test, only the most experienced marketers would have passed, and only with a C.

The need for ad testing and creative intelligence is critical.

As marketers, we tend to believe it’s our job to know conversion-driving ad creative when we see it. But that’s just not true. Our job is to iterate, test, and find out which ad creative converts. (How’s that for taking some of the pressure off?)

Truth is, testing your ad creative is the only way to know for sure how it will perform. Today’s most innovative marketers are going beyond traditional testing, using multivariate testing to find out not only which ad is their top performer but also which creative elements (images, copy, CTAs, offers, and more) perform best. Knowing what works is no longer enough; we now need to know why. This is the future of digital advertising: better performance through granular creative intelligence.

So let’s make a pact, shall we? Let’s remember that we’re all just human. Let’s never assume we know what will win. Let’s CTRL + ALT + DEL bias right out of our ads by testing everything. And let’s drive our businesses forward through better, data-backed creative, together.