A/B testing, which measures the performance of two or more markedly different creative concepts against each other, has long been the standard in testing ad creative. It’s the traditional way brands decide which ad or ad campaign to run against their media buy. Today, many brands run two or three ad concepts through some form of automated A/B testing before deploying their creative live.

But A/B testing leaves a lot to be desired in today’s advertising landscape. Audience targeting has become diluted, social platform and ad network algorithms change sporadically, and brands and agencies are constantly being pushed to do more with less.

Better ad creative (nay, the best possible ad creative) is the fastest way around these major market shifts. So while A/B testing isn’t obsolete (any testing is better than none at all), it does not provide the level of data marketers need to build “one ad to rule them all.”

How do we get to this deeper level of creative data? Through multivariate testing, an approach that measures the performance of every possible combination of creative variables: images, headlines, logo variations, calls to action, etc.
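To make "every possible combination" concrete, here is a minimal Python sketch of how a full set of ad variants could be enumerated. The element names and the `build_variants` helper are hypothetical, chosen only for illustration; real tests would plug in a brand's own assets.

```python
from itertools import product

# Hypothetical creative elements; every name here is illustrative only.
creative_variables = {
    "image": ["bottle_front", "bottle_in_hand", "lifestyle"],
    "headline": ["Fresh squeezed", "Taste the sun", "Pure juice"],
    "cta": ["Purchase now", "Learn more"],
}

def build_variants(variables):
    """Return one ad variant per combination of options (one option per variable)."""
    names = list(variables)
    option_lists = [variables[name] for name in names]
    return [dict(zip(names, combo)) for combo in product(*option_lists)]

variants = build_variants(creative_variables)
print(len(variants))  # 3 images * 3 headlines * 2 CTAs = 18 variants
```

The count grows multiplicatively with each added variable, which is why a handful of assets can turn into dozens or hundreds of ad variants.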

Let's go deeper into A/B testing vs. multivariate testing. Here are four major reasons A/B testing alone is no longer adequate for crafting top-performing ad creative, and how adding multivariate testing to the mix can help you get there.

A/B testing only tells you which ad performed better. Multivariate testing tells you why.

The greatest shortcoming of A/B testing is the sheer number of variables that are uncontrolled. Each ad concept in the test is typically wildly different from the others. After all, with just two or three chances to find a winner, you want options that have very little in common, right?

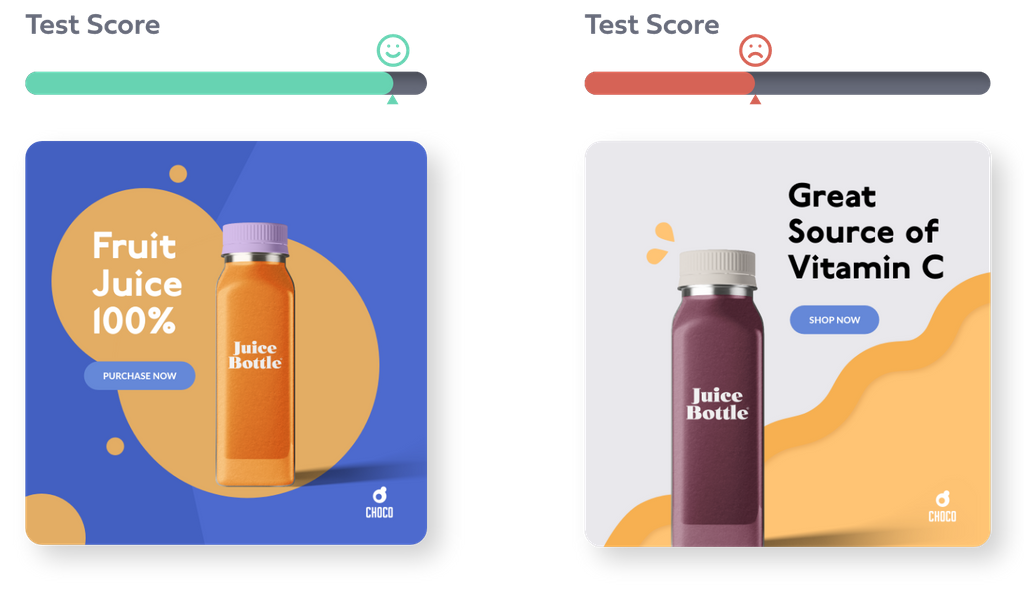

Because there are so many variables, there’s no telling why one worked over another. Take the three ads above, for example. We can see the ad on the far left was the winner. But it could be for a number of reasons.

Maybe audiences liked that the juice bottle was front and center. Maybe they liked that particular shade of blue background. Maybe it’s the fact that the juice in the bottle was orange. Or maybe “Purchase now” was just a really strong CTA.

You get where I’m going with this — we have no idea what in particular drew our audience to that ad most often.

Multivariate testing, on the other hand, pinpoints exactly which parts of an ad sparked performance — this is the why.

Let’s look at this multivariate test as an example:

This multivariate test was run with nine creative elements across three variables: three products, three images, and three headlines. Because every element was paired with every element of the other two variables, we can see not only which ad performed the very best but also which individual elements were winners. We know our winning ad won because the majority of our audience preferred that specific combination of creative elements. Below are the top-line results of this test, ranking ads and creative elements in order of CTR performance.

This is the magic of multivariate testing. Understanding why an ad is successful allows brands to drive broad insights down into granular details that will fully optimize their ad creative.
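One way to picture how element-level rankings fall out of a multivariate test is to pool impressions and clicks across every ad that contains a given element. The sketch below assumes a simple pooled-CTR approach; the `element_ctr` helper and the numbers in `rows` are made up for illustration and are not results from the test described above.

```python
from collections import defaultdict

# Hypothetical per-variant results; the figures are invented for illustration.
rows = [
    {"image": "A", "headline": "X", "impressions": 1000, "clicks": 40},
    {"image": "A", "headline": "Y", "impressions": 1000, "clicks": 20},
    {"image": "B", "headline": "X", "impressions": 1000, "clicks": 30},
    {"image": "B", "headline": "Y", "impressions": 1000, "clicks": 10},
]

def element_ctr(rows, variables):
    """Pool impressions and clicks per (variable, element) and return each CTR."""
    totals = defaultdict(lambda: [0, 0])  # (variable, element) -> [impressions, clicks]
    for row in rows:
        for var in variables:
            key = (var, row[var])
            totals[key][0] += row["impressions"]
            totals[key][1] += row["clicks"]
    return {key: clicks / imps for key, (imps, clicks) in totals.items() if imps > 0}

ctr = element_ctr(rows, ["image", "headline"])

# Rank individual creative elements from highest to lowest pooled CTR.
ranking = sorted(ctr.items(), key=lambda item: item[1], reverse=True)
for (variable, element), rate in ranking:
    print(f"{variable}={element}: {rate:.3f}")
```

Because every element appears alongside every other element, each pooled CTR averages over the rest of the creative, which is what lets a single element be credited with the lift.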

A/B testing only delivers short-term performance data. Multivariate testing builds a brand library of creative intelligence.

The only thing an A/B test can really tell you is how each ad performed against the others. Ad A drove 22% more purchases than ad B, for example. But if you don’t know why an ad worked, there’s very little you can definitively say “works all the time” for your brand.

One of the greatest benefits of investing in multivariate testing is that it serves as a proving ground for your entire brand. Not only do the insights learned here pertain to your ad creative, but they can also be applied holistically to all your properties:

- Winning messaging can be applied to anything from your home page to your audio ads

- Winning images can be used to inform future photoshoots

- Winning colors can be used in your packaging designs

Just as importantly, this library of creative intelligence allows you to see how the efficacy of creative assets evolves as you test them over time. Perhaps one version of your logo is the strongest for months until a different logo version begins to gain traction. The value of this knowledge compounds with every test. Understanding what your customers like about your ads can help you shape your brand for them in real time while continuously adding to a lifetime view of your strongest creative elements.

A/B testing keeps creatives and marketers in their own silos. Multivariate testing aligns them as strategic partners.

When only a handful of disparate ad concepts are needed for testing, and without a consistent feedback loop of evolving creative data, those responsible for concepting and designing ads don’t have any real strategic reason to work in lockstep with those responsible for testing and reporting on those ads.

Marketers get very little insight into the creative process and creatives receive very few learnings that can be applied to future ad creative. Each testing cycle is self-contained in nature — no previous learnings to be applied, and no new learnings to carry forward.

Multivariate testing builds a continuous loop of creative intelligence that inherently brings these two functions together:

- The performance team builds a testing strategy the creative team can bring their best ideas to

- The creative team concepts and generates ads for testing

- The performance team runs a multivariate test and relays the full set of intelligence back to the creative team

- The creative team uses this intelligence to ideate and design new assets and ad variants

- This virtuous cycle repeats

The success of these two teams becomes mutual and ongoing rather than a never-ending series of one-offs accomplished in silos.

A/B testing only measures a few ad variants. Multivariate testing is built for scale.

Scale is not something A/B testing is known for. Think about the entire process: a team of creatives spends weeks ideating and designing just two or three concepts, the test is run, you receive limited learnings, and because of the shallow nature of the data, you’re unsure what to test next. When you think about the money put behind that process you quickly realize how small the return is.

Multivariate testing, on the other hand, is the Thunderdome of testing ad creative. Dozens of creative assets and possibly hundreds of ad variations enter. The best emerge victorious. Your team is able to analyze the impact of each individual asset and improve future performance with the results.

Basic probability is at play here. The greater the number of ads tested, the more likely you are to find a winning variation.
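As a rough illustration of that point, suppose each ad variant independently has the same small chance of being a standout winner. Both the independence and the 5% figure are simplifying assumptions made here for the sketch, not numbers from any real test. The chance that at least one winner shows up among n variants is then 1 - (1 - p)^n.

```python
def chance_of_winner(n_variants, p_win):
    """Probability that at least one of n independent variants is a winner."""
    return 1 - (1 - p_win) ** n_variants

# Assumed 5% chance per variant; purely illustrative.
for n in (3, 12, 100):
    print(n, round(chance_of_winner(n, 0.05), 3))
```

Under these assumptions, three variants leave the odds of finding a winner low, while a hundred variants make it very likely.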

The ability to test at scale also allows you to identify, explore, and retest creative changes that lead to incremental performance improvements. Sometimes one simple change can blow up conversion rates unexpectedly.

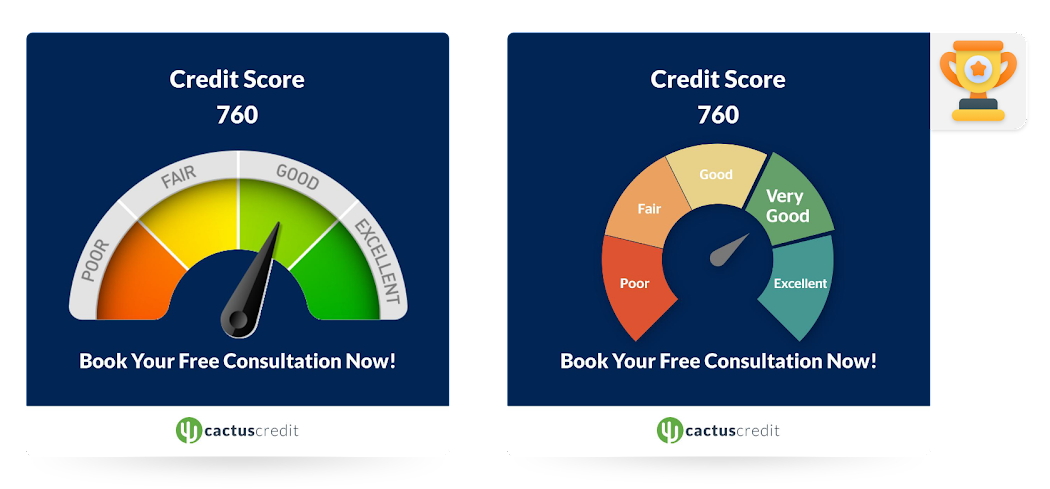

For example, take the two ads above, which were part of a multivariate test containing 12 total ad variants. Half of the ads featured a half-circle-shaped credit meter, while the other half featured a nearly full circle shape. The full circle graphic generated 53x the leads, a result that never would have been discovered through A/B testing alone.

It’s time to build multivariate testing into your marketing strategy.

Multivariate testing and A/B testing are both valid testing methods to learn something about your ad creative. They both test ads against each other, and they both measure how well ad creative performs against a goal (conversions, engagement, etc.).

Together, A/B testing and multivariate testing are a powerful pair. The first tells you which ad concept works best. The second tells you which combination of creative elements and variants within that concept drives the greatest increase in conversions.

But in today’s advertising ecosystem, A/B testing alone will no longer cut it. Using multivariate testing is the only way to derive the micro-level insights marketers need to boost ad performance — and stay ahead of the competition.