You can run ads in your sleep. Your creative is hitting benchmarks. But you want to turn things up a notch.

Enter creative testing: a process for discovering which ads and creative elements drive performance better than others.

Creative testing can build data-driven support around an ad campaign before it even begins. By testing a mix of creative elements within your ads, you can determine which combination is most likely to convert at scale.

But this process has become more complex than ever. Common questions include:

- How many ads should be tested at once?

- Which platform(s) should I test my ads on?

- Which audience(s) should I test my ads against?

- How long should I run my creative test?

- How much budget should I put behind my creative test?

Have we got some best practices for you. Follow these tips for more effective and efficient creative testing — and up the odds of finding top-performing ads and creative assets.

Creative testing requires serious management

To get accurate, impactful results out of your creative testing, there is a multitude of factors you need to consider before you start.

Variable management

Once ad designs have been approved for testing, take a strategic look at the components within them. Each of them — the copy, the images, the colors, the fonts — can impact performance one way or another.

Here are two great examples.

SolaWave, a direct-to-consumer skincare company, tested eight total ad variants in this experiment. Half of them featured a wavy line down the middle and the other half featured a straight line. The straight line resulted in a 350% increase in add-to-cart rate.

In this example, credit-building service Cactus Credit tested 12 ad variants. Half of them featured a half-circle-shaped credit meter, while the other half featured a nearly full circle shape. The full circle graphic generated — wait for it — 53x the leads.

It's important to carefully manage these variables by keeping track of all the different elements you're testing and ensuring that they're consistent across all versions of your ads.

For example, if you're testing headlines, make sure that the rest of the ad (e.g., image, CTA, etc.) is the same in all versions. Otherwise, you won't be able to accurately identify which element is causing any observed changes in performance.
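One way to catch an accidental second change before launch is to compare each variant against the control and list what differs. This is a minimal sketch, assuming each ad is described as a simple dictionary of elements; the `differing_elements` helper and the sample headline, image, and CTA values are hypothetical.

```python
def differing_elements(ad_a: dict, ad_b: dict) -> set:
    """Return the names of the elements whose values differ between two ads."""
    keys = ad_a.keys() | ad_b.keys()
    return {key for key in keys if ad_a.get(key) != ad_b.get(key)}


# Hypothetical control ad and a variant that should change the headline only.
control = {"headline": "Take a trip", "image": "man_walking.png", "cta": "Sign up"}
variant = {**control, "headline": "Your next trip starts here"}

changed = differing_elements(control, variant)
print(changed)  # {'headline'}
```

If the result contains more than the element you meant to test, the comparison is no longer a clean read on that element.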

When you're first starting out, keep things simple. Test a small number of creative elements at first, then increase the number of elements as you become more comfortable with the process.

Data management

Tracking your creative testing data is essential to making informed decisions about your results. Organize your test data in a way that lets you use the insights gleaned from current and past tests to help form the basis of your future ad creative.

This can be done manually with a spreadsheet by using some very specific, detailed naming conventions and aligning each ad with its corresponding data.

Here’s an example naming convention template:

campaign_audience_image_headline_CTA_postcopy

And here it is in practice:

ProspectingV1_Broad_ManWalking_TakeATrip_SignUp_FreeTripWithPurchase

While this may be a bit tricky to set up initially, it is worth it to have all your data organized and stored neatly in one place. That way, you can make insightful, metrics-backed decisions when designing new ad creative.
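If you keep names in this shape, a few lines of code can build and split them consistently so each ad lines up with its data. The sketch below assumes the six fields from the template, joined with underscores and containing no underscores themselves; the `AdName`, `build_ad_name`, and `parse_ad_name` names are illustrative, not part of any tool.

```python
from typing import NamedTuple


class AdName(NamedTuple):
    """Fields in the order used by the naming template."""
    campaign: str
    audience: str
    image: str
    headline: str
    cta: str
    postcopy: str


def build_ad_name(parts: AdName) -> str:
    """Join the six fields with underscores, rejecting empty or underscored fields."""
    for field, value in parts._asdict().items():
        if not value or "_" in value:
            raise ValueError(f"{field} must be non-empty with no underscore: {value!r}")
    return "_".join(parts)


def parse_ad_name(name: str) -> AdName:
    """Split an ad name back into its six labeled fields."""
    pieces = name.split("_")
    if len(pieces) != len(AdName._fields):
        raise ValueError(f"expected {len(AdName._fields)} fields, got {len(pieces)}")
    return AdName(*pieces)


ad = parse_ad_name("ProspectingV1_Broad_ManWalking_TakeATrip_SignUp_FreeTripWithPurchase")
print(ad.headline)  # TakeATrip
```

Parsing names this way lets you group spreadsheet rows by headline, image, or CTA without retyping labels by hand.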

Budgeting

It’s the number one question we get from marketers:

“How much should I spend on ad testing?”

There are plenty of factors to consider, including:

- Your overall media budget

- Your testing strategy

- Your goals and KPIs

- The number of ad variants you want to test

A good rule of thumb is to spend 10–20% of your overall media budget on creative testing. This allows you to pinpoint your top-performing ads and creative assets. Then, by scaling the top performers from your ad tests, your remaining 80–90% will work that much harder for you.
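To see what that rule of thumb means in dollars, here is a small sketch of the arithmetic. The $50,000 media budget is a made-up figure for illustration, and `split_media_budget` is a hypothetical helper.

```python
def split_media_budget(total_budget: float, testing_share: float) -> tuple[float, float]:
    """Return (testing_budget, scaling_budget) for a given testing share."""
    if total_budget < 0:
        raise ValueError("total_budget must not be negative")
    if not 0 <= testing_share <= 1:
        raise ValueError("testing_share must be between 0 and 1")
    testing = round(total_budget * testing_share, 2)
    return testing, round(total_budget - testing, 2)


total_media_budget = 50_000  # hypothetical overall media budget
for share in (0.10, 0.20):   # the low and high ends of the rule of thumb
    testing, scaling = split_media_budget(total_media_budget, share)
    print(f"{share:.0%} share: ${testing:,.2f} for testing, ${scaling:,.2f} for scaling")
```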

The benefits of creative testing don’t stop with the instant lift in performance and ROAS. Testing ad creative is one of the most efficient ways to increase ad ROI because there are plenty of long-term, unseen gains as well, like:

- Time saved debating creative decisions

- Time saved waiting for data

- Money saved by not running low-performing ads

- Money saved by building a micro-level library of creative intelligence

Creative testing best practices

Test one variable at a time

It’s crucial to change and test one variable at a time in your ad creative. Otherwise, it’s impossible to know what exactly caused a shift in performance.

Knowing that a specific headline, color, or image is a consistent driver of performance is a powerful insight. You could use that winning element across other branded touchpoints.

Here’s a great example. Audio agency Oxford Road tested static image ads for their client, Moink (a subscription box of farm-raised meats). An image of bacon increased clicks more than any other image — outperforming steak by 15% and chicken by 32%. Oxford Road used this insight to update the offer in Moink’s audio ads to “free bacon for a year,” subsequently dropping the brand’s audio ad CPA by 67%.

Testing one variable at a time also lets you dig deeper into that variable to find incremental performance gains. For example, if you found that images of women with dark hair performed best in your ad creative, you could test multiple images of women with dark hair to see whether any of them could best the original.

This practice is nearly impossible to apply with traditional testing methods and mind-bogglingly tedious to try manually.

Give special attention to messaging

Messaging is the easiest variable to test (it’s much faster to think up a new headline than to shoot a new image, for example). Plus, messaging can have an enormous impact on performance. CTAs and headlines are a great place to start.

CTAs

CTAs should be short and sweet. So it’s usually pretty easy to whip up a handful for testing. The trick is to say the same thing in as many ways as possible and imply a sense of urgency. Here are a few examples based on different KPIs.

- CTAs to drive purchase

  - Shop now

  - Buy now

  - Shop the collection

  - Shop the latest

  - Get it before it’s gone

- CTAs to generate leads

  - Sign up now

  - Schedule a demo

  - Chat with our team

  - Get on the list

  - Apply now

- CTAs to generate clicks

  - Get more info

  - Learn more

  - Discover [product/service/brand name]

  - See what’s new

  - Get started

Here’s a great CTA test example performed by our customer Acadia.

Eight total ad variants were tested, half of which featured “Grow your career” and half of which featured “We’re hiring.” The more concrete “We’re hiring” had a 170% higher click-through rate.

Headlines

Just like CTAs, writing a handful of headlines for multivariate testing is a relatively light lift. To make it even easier for you, I came up with some fill-in-the-blank headline formulas you can use right now. (For each one, I’ve also supplied a headline written for Marpipe so you can see an example.)

These headlines are solution-focused. So think about what intangibles your product or service offers potential customers when writing them.

- [Verb] [ideal state] without [painpoint]

Test 10x more ads without killing your creatives.

- [Feature] for [ideal state].

Granular creative data for building top-performing ads.

- Don’t just [traditional state]. [Ideal state].

Don’t just follow best practices. Find your own.

- [Verb] your way to [ideal state].

Test your way to top ad performance.

- [Ideal state] start(s) here.

Conversion-driving ad creative starts here.

Test different ad placements

In the world of paid social, placements determine how and where your ad is displayed to your audience. And there is no shortage of them — Facebook alone has more than 20 to choose from.

The format of your ad can have an enormous impact on its performance, even if the creative elements contained within are exactly the same. Resize and test your ad creative in different placements to see which ones are most likely to help you hit your goals.

Set specific goals

Before you jump headfirst into creative testing, take some time to understand what you hope to accomplish.

First, what do you want to learn from your creative test?

A well-thought-out hypothesis will help inform what variables — images, headlines, calls to action, etc. — should be tested in your ad creative.

Here are a few examples:

- I want to see which generates more conversions: images of my product being used by a model or images of the product by itself.

- I want to see if % off or $ off drives more clicks.

- I want to see if dark or light background colors generate more leads.

The goal is to learn one or two things per test. Decide and document what you want to learn. Then choose and test the creative assets that will help you arrive at an answer. A strong hypothesis leads to a more controlled experiment, which leads to clearer results.

Second, what metric will you measure to indicate success?

The way you measure performance will vary depending on your business goals, but some common KPIs are:

- cost-per-acquisition (CPA)

- cost-per-click (CPC)

- click-through rate (CTR)

- ad impressions

- leads generated

- return on ad spend (ROAS)
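The ratio metrics in this list follow their standard definitions: CPA is spend divided by acquisitions, CPC is spend divided by clicks, CTR is clicks divided by impressions, and ROAS is revenue divided by spend. Impressions and leads are raw counts. The sketch below computes the ratios from one ad's totals; the `AdStats` fields and the sample numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdStats:
    spend: float
    impressions: int
    clicks: int
    conversions: int
    revenue: float


def ratio(numerator: float, denominator: float) -> Optional[float]:
    """Divide, returning None when the denominator is zero (metric undefined)."""
    return numerator / denominator if denominator else None


def kpis(stats: AdStats) -> dict:
    """Compute the common ratio KPIs from raw totals."""
    return {
        "cpa": ratio(stats.spend, stats.conversions),   # cost per acquisition
        "cpc": ratio(stats.spend, stats.clicks),        # cost per click
        "ctr": ratio(stats.clicks, stats.impressions),  # click-through rate
        "roas": ratio(stats.revenue, stats.spend),      # return on ad spend
    }


# Hypothetical totals for a single ad.
example = kpis(AdStats(spend=200.0, impressions=10_000, clicks=250, conversions=10, revenue=800.0))
print(example)
```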

Understand your audience

The odds that the same ad creative will resonate with all your audience segments are low. For example, returning customers may convert more often when a discount is present. But a new customer may be more compelled by a lifestyle or sustainability message.

By showing the same ad creative to all your audience segments, you’re ignoring those nuances, potentially driving up your CPA and decreasing your ROAS.

Understanding your audience’s life stage, key motivators, and pain points is crucial to crafting ad creative they’ll find relatable and click-worthy. Proper creative testing is an incredibly efficient way to pinpoint those characteristics so you can take advantage of them as you test and scale.

Why we recommend multivariate testing

There are two predominant creative testing methods: multivariate testing (MVT) and A/B testing. Both are valid ways to learn something about your ad creative.

They both:

- Test ad creative against other ad creative

- Measure how well ad creative performs against a goal (conversions, engagement, etc.)

Where multivariate testing and A/B testing differ is in:

- The number of ads typically run in each test

- The state of the variables in each test

- The granularity of the results of each test

A/B testing measures the performance of two or more markedly different creative concepts against each other.

The greatest shortcoming of A/B tests is the sheer number of uncontrolled variables — each ad concept in the test is typically wildly different from the others. So while A/B testing can tell you which ad concept people prefer, it can’t tell you why.

Do they like the headline? Do they like the photo? With A/B testing, you never know. This is why A/B testing alone is no longer enough to achieve maximum performance.

Multivariate testing can break down those details for you, though. Multivariate testing (MVT) measures the performance of every possible combination of creative variables.

Variables are any single element within an ad — images, headlines, logo variations, calls to action, etc.

Because you can measure how every variable works with every other variable, you are able to understand not only which ads people love and dislike the most, but also exactly which variables people love or dislike the most in aggregate. Instead of seeing the performance of those variables in isolation, you see how they perform no matter what they were paired with in the ad. You can use this micro-level of creative data to optimize your ad creative for peak performance.

The true value of multivariate testing lies in the depth of creative intelligence it delivers, and the ability to use those learnings in future creative versions. If you knew that a certain color or image or CTA consistently drove more people to purchase, you could capitalize on that knowledge to optimize your ads until a new winner emerged.

For example, let’s say while concepting an ad campaign, your creative team came up with the following:

- 4 headlines

- 3 images

- 2 background colors

- 3 calls to action

To run a multivariate test against these assets, you’d build an ad for every possible combination, all 72 of them (4 × 3 × 2 × 3), and test them against each other.
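The combination count is easy to check in code. This sketch enumerates every pairing with Python's `itertools.product`; the asset labels are placeholders, and only the counts (4, 3, 2, 3) come from the example above.

```python
from itertools import product

# Placeholder labels; swap in your real headlines, images, colors, and CTAs.
assets = {
    "headline": [f"headline_{n}" for n in range(1, 5)],
    "image": [f"image_{n}" for n in range(1, 4)],
    "background": ["background_1", "background_2"],
    "cta": [f"cta_{n}" for n in range(1, 4)],
}

# One dictionary per ad variant, covering every possible combination.
variants = [dict(zip(assets, combo)) for combo in product(*assets.values())]

print(len(variants))  # 72
print(variants[0])
```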

Perhaps you find that one headline outperforms the other three, and one image doubles the click-through rate. You would be sure to use that headline and image in future ad creative.
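Reading results per element means pooling every ad that contains a given asset, whatever it was paired with. Here is a minimal sketch of that aggregation for click-through rate, assuming each result row carries the ad's elements plus its clicks and impressions. The `ctr_by_element` helper and the four sample rows are hypothetical, and this is not a description of how any particular platform computes its reports.

```python
from collections import defaultdict


def ctr_by_element(results: list, variable: str) -> dict:
    """Pool clicks and impressions across all ads sharing each value of `variable`."""
    totals = defaultdict(lambda: [0, 0])  # value -> [clicks, impressions]
    for row in results:
        bucket = totals[row[variable]]
        bucket[0] += row["clicks"]
        bucket[1] += row["impressions"]
    return {value: clicks / imps for value, (clicks, imps) in totals.items() if imps}


# Hypothetical results for a tiny 2 x 2 test (two headlines, two images).
results = [
    {"headline": "A", "image": "img1", "impressions": 1000, "clicks": 30},
    {"headline": "A", "image": "img2", "impressions": 1000, "clicks": 50},
    {"headline": "B", "image": "img1", "impressions": 1000, "clicks": 10},
    {"headline": "B", "image": "img2", "impressions": 1000, "clicks": 30},
]

headline_ctr = ctr_by_element(results, "headline")
image_ctr = ctr_by_element(results, "image")
print(headline_ctr)
print(image_ctr)
```

In this made-up data, headline A and image img2 each come out ahead once their ads are pooled, which is the kind of element-level read a single A/B comparison can't give you.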

Marpipe’s approach to creative testing

Marpipe automates every step of the multivariate test process — from design to data analysis. This lets you zero in on both the ads and creative elements that drive conversions faster. Brands that test their ad creative on Marpipe have seen some incredible results — we’re talking 53x the lead gen and 127% ROAS. Here are some of the features that set our platform apart:

Modular design approach

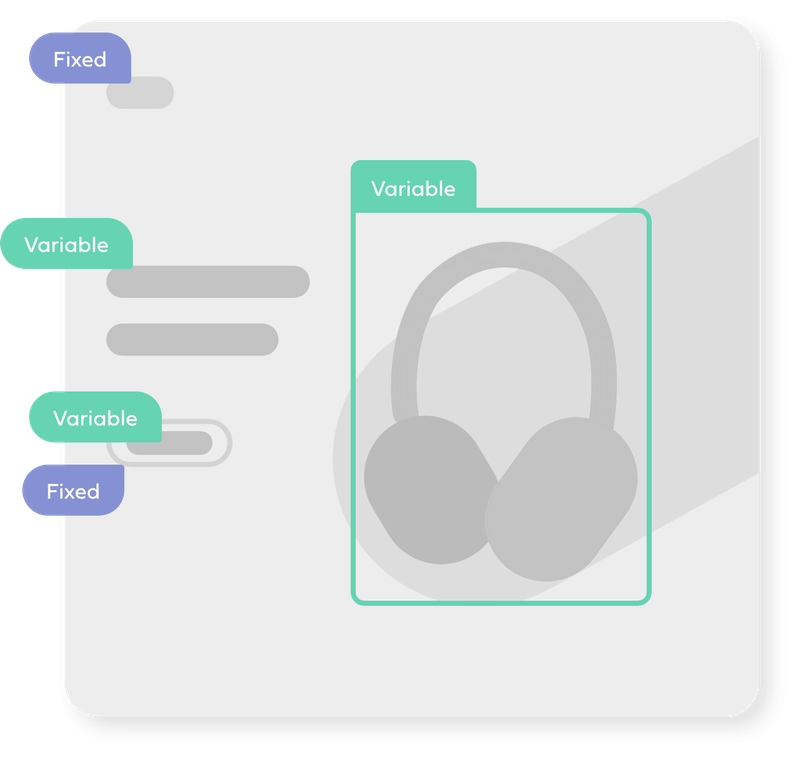

Modular design is a design approach that uses placeholders within a template to hold space for creative elements to live interchangeably. It's a foundational pillar of designing ads at scale on Marpipe, and what allows each design element to be paired with all other design elements programmatically. This gives you total control over all your variables for an effective test.

Within Marpipe, you can break your placeholders down into two types:

- Variable: Any placeholder for an asset you’re going to test. These assets should be interchangeable with one another. In other words, you can swap any asset of the same type into the placeholder and the design still works.

- Fixed: Any placeholder staying the same across every ad variation. These assets should work cohesively with your variable assets that are being swapped in automatically. One common example of a fixed asset is your brand logo. It typically stays the same size, color, and location in each variation.
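To picture how the two placeholder types work together, here is a rough data-structure sketch. It is not Marpipe's API or file format; the `ModularTemplate` class, its field names, and the sample assets are all assumptions made for illustration.

```python
from dataclasses import dataclass
from itertools import product


@dataclass
class ModularTemplate:
    fixed: dict      # placeholder -> the one asset used in every variation
    variable: dict   # placeholder -> list of interchangeable assets to test

    def variations(self) -> list:
        """Fill the variable placeholders with every combination of assets."""
        names = list(self.variable)
        combos = product(*(self.variable[name] for name in names))
        return [{**self.fixed, **dict(zip(names, combo))} for combo in combos]


# Hypothetical template: a fixed logo, two headlines, and three images.
template = ModularTemplate(
    fixed={"logo": "brand_logo.png"},
    variable={
        "headline": ["Headline A", "Headline B"],
        "image": ["img1.png", "img2.png", "img3.png"],
    },
)

ads = template.variations()
print(len(ads))  # 6
print(ads[0])
```

Every variation carries the same fixed logo, while the headline and image slots rotate through all of their options.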

Placement Variants

Designing ad creative to appear in every possible placement size — while still keeping its intrinsic content intact — is an extremely time-consuming process when done manually.

Placement Variants lets you design ad variations in multiple sizes all at once. All of the placement variations you edit will be available when launching your test.

This is a huge timesaver for creative teams. By creating one ad, you’re actually creating ads in every size you could possibly need — without having to constantly start from scratch.

Built-in Confidence Meter

Many advertisers prefer their ad tests to reach statistical significance — or stat sig — before considering them reliable. (Stat sig refers to data that can be attributed to a specific cause and not to random chance.)

Marpipe is the only automated multivariate creative testing platform with a built-in live statistical significance calculator. We call it the Confidence Meter.

In real-time, it helps you understand:

- whether or not a variant group has reached high confidence

- if further testing for a certain variant group is necessary

- whether repeating the test would result in a similar distribution of data

- when you have enough information to move on to your next test
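For a feel of what a significance check involves, here is a generic textbook two-proportion z-test comparing the conversion rates of two variant groups. It is only a sketch under the usual large-sample assumptions, it is not how the Confidence Meter is computed (those internals aren't described here), and the function name and sample counts are hypothetical.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one impression")
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_a - rate_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts: conversions out of impressions for two variant groups.
clear_gap = two_proportion_p_value(50, 1000, 100, 1000)
tiny_gap = two_proportion_p_value(50, 1000, 52, 1000)
print(clear_gap < 0.05, tiny_gap < 0.05)
```

A small p-value suggests the gap is unlikely to be random chance; a large one suggests the test needs more data before you call a winner.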

Template library

Your ad template is the controlled container inside of which all your variables will be tested. It should be flexible enough to accommodate every creative element you want to test, and yet still make sense creatively no matter the combination of elements inside. (This is where modular design principles become super important!)

Marpipe has more than 130 pre-built modular templates for you to choose from in our library — all based on top-performing ads. Or you can build your own. To make sure your template exhibits the look and feel of your brand, you can upload brand colors, fonts, and logos right into Marpipe for all your tests.

Test budgeting made simple

Your overall ad creative testing budget will determine:

- how long you run your test

- your budget per ad group

The larger the per-test budget, the more variables you can include per test.

Marpipe shows you how your budget breaks down before you launch your ad test. So if you create more variants than you have creative testing budget for, you can simply remove elements and shelve them for a future test. (Vice versa: if you find you haven’t included enough variants to hit your allotted creative testing budget, you can add variables until you do.)

Marpipe also places every ad variant into its own Facebook ad set, each with its own equal budget. This prevents the platform algorithm from automatically favoring a variant and skewing your test results.
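The equal-split arithmetic is simple enough to sanity-check yourself. This sketch divides a test budget evenly across variants and estimates how many variants a budget can cover; the $7,200 budget, 10-day run, and $150 minimum spend per variant are made-up inputs, and both helper names are hypothetical.

```python
def per_ad_set_budget(test_budget: float, variant_count: int, days: int) -> tuple:
    """Split a test budget equally: returns (total per ad set, daily per ad set)."""
    if variant_count <= 0 or days <= 0:
        raise ValueError("variant_count and days must be positive")
    per_variant = test_budget / variant_count
    return per_variant, per_variant / days


def affordable_variants(test_budget: float, min_spend_per_variant: float) -> int:
    """How many variants the budget covers at an assumed minimum spend each."""
    if min_spend_per_variant <= 0:
        raise ValueError("min_spend_per_variant must be positive")
    return int(test_budget // min_spend_per_variant)


per_set, per_set_daily = per_ad_set_budget(test_budget=7_200, variant_count=72, days=10)
print(per_set, per_set_daily)
print(affordable_variants(test_budget=7_200, min_spend_per_variant=150))
```

If the affordable count comes in below the number of variants you built, that's the cue to shelve an element for a future test.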

Take your creative testing to the next level

Even if your ad creative is checking all the boxes, creative testing can help you unlock performance opportunities you didn’t even know were there. Automating multivariate testing with Marpipe can help you zero in on those opportunities faster.

Ready to scale your ads with confidence and use creative data to power advertising decisions? Book some time with an actual human on our team.
