Your ad creative is often one of the first — or very first — encounters people have with your brand. It dictates how people feel about your product, not to mention whether people engage, click, and land on your website to make a purchase.

This is why testing your ad creative is crucial to growing your brand and business. If a certain color, image, or call to action was significantly more likely to drive purchase, wouldn't you want to know that?

Let’s dive deeper into the importance of testing your ad creative, the pros and cons of the two most popular testing methods, and best practices for testing your ad creative at scale.

Why do brands test their ad creative?

Testing your ad creative allows you to constantly improve your ad performance and get the most out of your advertising spend. You can learn what your target audience finds most relevant as measured by their engagement, clicks, and purchases.

For example, menswear brand Taylor Stitch saw a 66% decrease in cost-per-purchase when testing ads with and without models. The ad without a model shown here proved to be the winner.

And credit repair company Cactus Credit saw an enormous performance boost when testing different credit meter graphics. The full circle graphic in the ad on the right generated 53x the leads compared to the half circle on the left.

It's now more important than ever for brands to test their ad creative regularly. It used to be that you could throw a few pieces of creative out there, find a winner and run it into the ground. Buuuuut: the advent of user privacy protection measures — the disintegration of third-party cookies, iOS 14 ATT, GDPR, CCPA — has made traditional audience targeting increasingly diluted and unreliable. This also means it takes longer to learn what ad creative works on platforms like Facebook because they receive and provide much less data than before.

The less reliable the targeting and the longer it takes to receive feedback, the more important it is to have your creative spot-on. Here’s why:

You can no longer assume the best prospects are seeing your ad. With broader audiences come tons of people who simply don’t care about your brand or what it has to offer. Impressions become a nearly irrelevant metric because so many of them are potentially wasted.

Getting to your target fast is paramount. The longer it takes for your ads to cut through larger, generalized audiences to the subset you need to reach, the slower your ability to convert.

When conversions lag, growth stagnates.

The moral of the story? Cutting through broad audiences requires super-resonant ads. The key is finding the creative that calls to your demographic as efficiently as possible with your spend, as quickly as you can.

How can you know for sure your creative will work? It used to be that you could try and nail it in one go by putting out a bunch of different versions.

But now? Test as many ads as you can — as fast as you can — to find the highest-performing creative elements. In other words, move away from finding “winning ads” to finding “winning assets” you can pair together to make the best ads possible. This allows your team to make data-driven decisions about ad creative as opposed to using biased opinions or "creative intuition."

You can then use this data to build compounding ad performance — layering many conversion-driving insights into one ad and scaling it.

Let’s talk about the type of testing needed to get granular creative data like this, because it’s probably different from what you’re used to.

Multivariate testing vs A/B testing

There are two predominant ad testing methods: multivariate testing (MVT) and A/B testing. Both are valid ways to learn something about your ad creative.

They both:

- Test ad creative against other ad creative

- Measure how well ad creative performs against a goal (conversions, engagement, etc.)

Where multivariate testing and A/B testing differ is in:

- The number of ads typically run in each test

- The state of the variables in each test

- The granularity of the results of each test

A/B testing

A/B testing measures the performance of two or more markedly different creative concepts against each other. Long the standard in testing ad creative, this is historically the way brands decide which ad or ad campaign to run against their media buy. Today, many brands run their ad creative through some form of A/B testing before deploying their creative live.

But the greatest shortcoming of A/B tests is the sheer number of uncontrolled variables — each ad concept in the test is typically wildly different from the others. So while A/B testing can tell you which ad concept people prefer, it can’t tell you why.

Do they like the headline? Do they like the photo? With A/B testing, you never know.

Multivariate testing can break down those details for you, though. This is why A/B testing alone is no longer enough to achieve maximum performance.

Multivariate testing

Multivariate testing measures the performance of every possible combination of creative variables.

Variables are any single element within an ad — images, headlines, logo variations, calls to action, etc.

Because you can measure how every variable works with every other variable, you are able to understand not only which ads people love and dislike the most, but also exactly which variables people love or dislike the most in aggregate. Instead of seeing the performance of those variables in isolation, you see how they perform no matter what they were paired with in the ad. You can use this micro-level of creative data to optimize your ad creative for peak performance.

The true value of multivariate testing lies in the depth of creative intelligence it delivers, and the ability to use those learnings in future creative versions.

If you knew that a certain color or image or CTA consistently drove more people to purchase, you could capitalize on that knowledge to optimize your ads until a new winner emerged.

For example, let’s say while concepting an ad campaign, your creative team came up with the following:

- 4 headlines

- 3 images

- 2 background colors

- 3 calls to action

To run a multivariate test against these assets, you’d build an ad for every possible combination — all 72 of them (4x3x2x3) — and test them against each other.
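
To make the math concrete, here is a minimal Python sketch of how those 72 combinations could be enumerated. The asset names and the `build_variants` helper are made up for illustration; they are not part of any particular tool.

```python
from itertools import product

# Hypothetical asset names standing in for a real creative team's work.
headlines = ["Headline A", "Headline B", "Headline C", "Headline D"]
images = ["image_1.png", "image_2.png", "image_3.png"]
backgrounds = ["dark", "light"]
ctas = ["Shop now", "Learn more", "Get yours"]


def build_variants(headlines, images, backgrounds, ctas):
    """Return one dict per ad: every combination of the four asset types."""
    return [
        {"headline": h, "image": i, "background": b, "cta": c}
        for h, i, b, c in product(headlines, images, backgrounds, ctas)
    ]


variants = build_variants(headlines, images, backgrounds, ctas)
print(len(variants))  # 72, matching 4 x 3 x 2 x 3
```

Adding just one more headline would push the count to 90, which is why the number of ads grows so quickly as you add assets.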

Perhaps you find that one headline outperforms the other three, and one image doubles the click-through rate. You would be sure to use that headline and image in future ad creative.
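
One simple way to read results "in aggregate" is to pool the numbers for every ad that used a given element. The sketch below assumes each ad's results arrive as a dict with `impressions` and `clicks`; the `rate_by_element` helper and the figures are invented for illustration and are not real test data.

```python
from collections import defaultdict


def rate_by_element(results, variable):
    """Pool clicks and impressions over every ad that used each value of `variable`."""
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for ad in results:
        clicks[ad[variable]] += ad["clicks"]
        impressions[ad[variable]] += ad["impressions"]
    # Assumes every element received at least one impression.
    return {value: clicks[value] / impressions[value] for value in impressions}


# Made-up results for four ads (2 headlines x 2 images), for illustration only.
results = [
    {"headline": "A", "image": "model", "impressions": 1000, "clicks": 20},
    {"headline": "A", "image": "product", "impressions": 1000, "clicks": 40},
    {"headline": "B", "image": "model", "impressions": 1000, "clicks": 10},
    {"headline": "B", "image": "product", "impressions": 1000, "clicks": 30},
]

headline_ctr = rate_by_element(results, "headline")
image_ctr = rate_by_element(results, "image")
print(headline_ctr)
print(image_ctr)
```

In this toy data, headline A beats headline B and the product-only image beats the model image, regardless of what each was paired with. That is the kind of element-level insight an A/B test of two whole concepts can't give you.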

Best practices for ad testing

In theory, multivariate ad testing is simple: it’s the scientific method applied to creative.

The reality is, building and testing that many ads is no small feat for any marketing or advertising team. The version creating, the data-tracking … it can be mind-boggling.

The following five best practices are crucial to making multivariate testing humanly possible.

Take the time to define your marketing goals

Before you jump headfirst into multivariate ad testing, take some time to understand what you hope to accomplish. What do you want to learn from the test you’re going to run?

Begin your testing strategy with the end in mind so you plan and build your tests more methodically, resulting in more meaningful creative data.

The first step is to answer this question:

What area (or areas) of the brand or business do we most need to affect with better ad creative?

The answer depends greatly on your business’s maturity, overall growth goals, and go-to-market strategy. Here are some examples:

- Prospecting: What works best with current customers isn’t always what works best with new ones. Ad testing can help you find the best possible ad creative to bring new people to your brand.

- Retaining current customers: Once they’ve made their first purchase, run ad tests to find the ad creative that keeps customers coming back.

- Launching new products and product lines: Set your new products and product lines up for success. Find winning ads that drive people to purchase your latest offerings.

- Testing seasonal designs and messages: Find out which ads work best to draw people into your biggest deals and holiday sales of the year.

Start with a solid hypothesis

Without a hypothesis, you lack rationale for which assets you choose to test. This means you can’t categorize your variables in a meaningful way. Your test will lack focus, and your data won’t be as powerful or meaningful as it could be.

Asking yourself, “What do I want to learn here?” is the very first step in kicking off an effective multivariate ad test. A well-thought-out hypothesis will help inform what variables — images, headlines, calls to action, etc. — should be tested in your ad creative.

Here are a few examples:

- I want to see which generates more conversions: images of my product being used by a model or images of the product by itself.

- I want to see if % off or $ off drives more clicks.

- I want to see if dark or light background colors generate more leads.

The goal is to learn one or two things per test. Decide and document what you want to learn. Then choose and test the creative assets that will help you arrive at an answer. A strong hypothesis leads to a more controlled experiment, which leads to clearer results.

Test at scale with automation

Without some sort of automation in place, multivariate testing at any kind of scale is downright painful to try and execute.

Designing and resizing every ad variant manually is tedious, can lead to burnout, and takes away from more strategic creative thinking and ideation.

And manually testing all of those designs? Forget it. It’s cumbersome and difficult to track. Not to mention that paid social platforms throw off the validity of manual testing because they don’t evenly allocate spend across all your versions.

Scale via automation is the key to successful multivariate testing. Automated multivariate ad testing tools do the rote work for you — from generating every possible ad variation to structuring the audience, budget, and placements of a test campaign, including controlling the spend equally across all ad variants.

In short, automation lets you iterate and optimize ad creative at a pace never before possible. Hooray!

Continuously test creative

Multivariate ad testing should be an ongoing process, not a one-time thing. The best way to keep your finger on the pulse of what’s working (and what isn’t) is to continuously test and iterate on your ad creative.

This doesn’t mean you need to run an infinite number of tests. You can and should focus your tests on the areas of your business that will have the biggest impact.

But continuous testing ensures you’re always learning and improving your ad creative to stay ahead of the competition.

Another benefit of continuous ad testing is preventing creative fatigue — the point at which your audience has seen your ad too many times, rendering the ad creative ineffective. Consistently running multivariate ad tests keeps your ad creative fresh, and exposure to each ad low.

Measure success

Your brand's ad testing KPIs are dependent on your marketing goals, testing strategy, and hypothesis. Before launching a test, decide which primary and secondary KPIs you want to test against. The best automated multivariate testing platforms let you choose the KPI you want to measure before launch, including:

- Engagement

- Click-through rate

- Add-to-cart rate

- Cost per action

- And many more

Any time you find ads or creative elements that perform close to or better than your KPI goals, you should scale them.
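
If you're pulling raw numbers yourself, these KPIs are simple ratios. The sketch below uses common definitions as assumptions: click-through rate as clicks per impression, add-to-cart rate as add-to-carts per click, and cost per action as spend per purchase. Your platform may define them differently, and the `ad_kpis` helper is hypothetical.

```python
def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def ad_kpis(impressions, clicks, add_to_carts, purchases, spend):
    """Compute a few KPIs for one ad from raw counts (definitions are assumptions)."""
    return {
        "click_through_rate": safe_ratio(clicks, impressions),
        "add_to_cart_rate": safe_ratio(add_to_carts, clicks),
        "cost_per_action": safe_ratio(spend, purchases),
    }


# Made-up numbers for a single ad, for illustration only.
kpis = ad_kpis(impressions=10_000, clicks=200, add_to_carts=50, purchases=10, spend=500.0)
print(kpis)
```

Returning `None` instead of dividing by zero keeps an ad with no purchases yet from breaking your report.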

How Marpipe can help you test your ad creative

Modular design approach

Modular design is a design approach that uses placeholders within a template to hold space for creative elements to live interchangeably. It's a foundational pillar of designing ads at scale on Marpipe, and what allows each design element to be paired with all other design elements programmatically. This gives you total control over all your variables for an effective test.

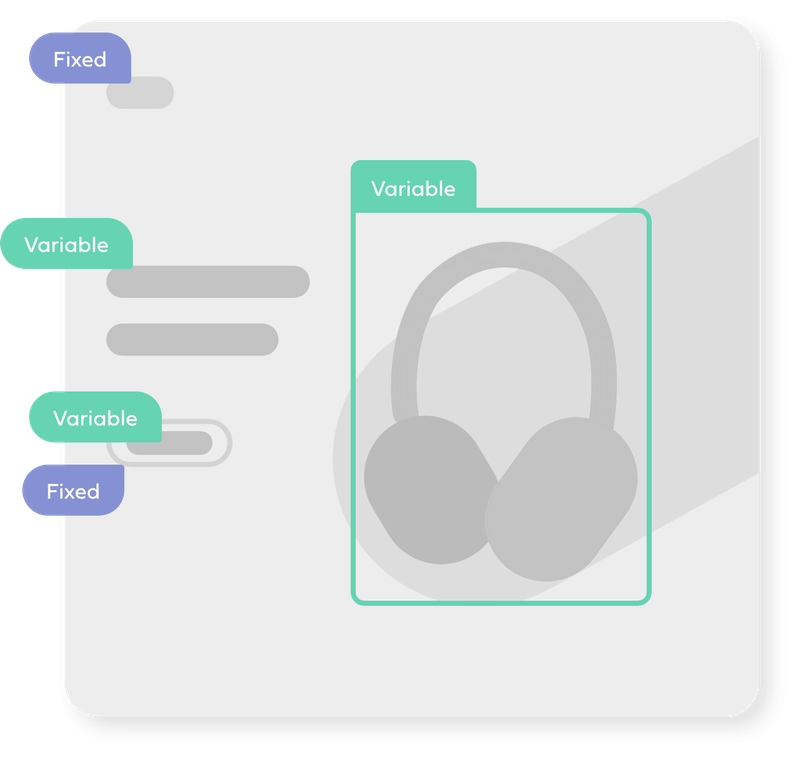

Within Marpipe, you can break your placeholders down into two types:

- Variable: Any placeholder for an asset you’re going to test. These assets should be interchangeable with one another. In other words, you can swap any asset of the same type into the placeholder and the design still works.

- Fixed: Any placeholder staying the same across every ad variation. These assets should work cohesively with your variable assets that are being swapped in automatically. One common example of a fixed asset is your brand logo. It typically stays the same size, color, and location in each variation.

Built-in Confidence Meter

Many advertisers prefer their ad tests to reach statistical significance (or stat sig) before considering them reliable. (Stat sig means an observed difference in performance is unlikely to be the result of random chance alone.)

Marpipe is the only automated multivariate ad testing platform with a built-in live statistical significance calculator. We call it the Confidence Meter.

In real-time, it helps you understand:

- whether or not a variant group has reached high confidence

- if further testing for a certain variant group is necessary

- whether repeating the test would result in a similar distribution of data

- when you have enough information to move on to your next test
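
For a feel of what a significance check involves, here is a generic two-proportion z-test in Python. This is a textbook method chosen for illustration, not a description of how the Confidence Meter works internally; the function name and the numbers are hypothetical.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    # Standard normal CDF of |z|, computed with the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


# Made-up example: variant A converts 120 of 2,000 people, variant B 90 of 2,000.
p_value = two_proportion_p_value(120, 2000, 90, 2000)
print(p_value < 0.05)  # True: unlikely to be chance at the common 5% threshold
```

A small p-value suggests the gap between the two variants probably isn't noise. With lots of variants in a single test, you would usually want a stricter threshold or a correction for multiple comparisons.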

Template library

Your ad template is the controlled container inside of which all your variables will be tested. It should be flexible enough to accommodate every creative element you want to test, and yet still make sense creatively no matter the combination of elements inside. (This is where modular design principles become super important!)

Marpipe has more than 130 pre-built modular templates for you to choose from in our library — all based on top-performing ads. Or you can build your own. To make sure your template exhibits the look and feel of your brand, you can upload brand colors, fonts, and logos right into Marpipe for all your tests.

Test budgeting made simple

Your overall ad creative testing budget will determine 1) how long you run your test and 2) your budget per ad group. The larger the per-test budget, the more variables you can include per test.

Marpipe shows you how your budget breaks down before you launch your ad test. So if you create more variants than you have testing budget for, you can simply remove elements and shelve them for a future test. (Vice versa: if you find you haven’t included enough variants to hit your allotted testing budget, you can add variables until you do.)

Marpipe also places every ad variant into its own Facebook ad set, each with its own equal budget. This prevents the platform algorithm from automatically favoring a variant and skewing your test results.
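
The budgeting arithmetic itself is straightforward. The sketch below assumes an even split with one ad set per variant, plus a hypothetical minimum spend per ad set that you would set yourself. The helper names and dollar figures are illustrative, not taken from Marpipe or Facebook.

```python
def budget_per_ad_set(total_budget, variant_count):
    """Split a test budget evenly, one ad set per variant."""
    if variant_count <= 0:
        raise ValueError("variant_count must be positive")
    return total_budget / variant_count


def max_variants(total_budget, min_per_ad_set):
    """Largest variant count that still gives each ad set the minimum spend."""
    if min_per_ad_set <= 0:
        raise ValueError("min_per_ad_set must be positive")
    return int(total_budget // min_per_ad_set)


# Hypothetical figures for illustration only.
total = 3600.0
print(budget_per_ad_set(total, 72))  # 50.0 per ad set for a 72-variant test
print(max_variants(total, 100.0))    # 36 variants if each ad set needs 100.0
```

If the second number comes out lower than the variants you built, that is your cue to shelve a few elements for a future test.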

Drive conversions with multivariate testing

Multivariate testing is an essential tool for creating effective ad campaigns at scale. Continuously testing and tweaking your ad creative ensures your ads are optimized for maximum conversion, despite today's landscape of diluted audience targeting.

Marpipe automates the entire multivariate ad testing process, so you can iterate smarter and find winning ads faster than ever before. If you're ready to test your way to top ad performance, we're here to help.